Introduction

When it comes to automating builds for any project that I undertake, my go-to OS is usually Linux. I generally find Linux build nodes easier to deploy and manage, and usually cheaper, than their Windows counterparts. The problem, of course, is that Windows-based software generally needs cross-compiling in some way or other. For C/C++ based tooling, my go-to build system is CMake with MinGW, which makes cross-compiling for Windows relatively easy.

When it comes to building C# tools on Linux, we can use the .NET Core SDK. With the release of the Microsoft.NETFramework.ReferenceAssemblies NuGet package, targeting most legacy .NET Framework versions is a breeze. A quick dotnet msbuild invocation within your C# project directory will generally yield executables that run on Windows and target the older framework versions rather than the newer .NET Core technology stack.

Unfortunately, things start to fall apart when it comes to offensive C# tooling. Because C2 frameworks such as Cobalt Strike can load and execute .NET assemblies, compilation usually involves a step that merges dependent assemblies into a monolithic exe ready for execution. Up until recently, my preference was to use Costura. Costura is a Fody extension that compresses and merges assemblies inside the main executable, then decompresses and loads them from memory on demand during execution. That’s great, so what’s the problem? Since Costura is a build-time dependency, on Linux it is executed under .NET Core during the build process. The net effect is that additional .NET Core assembly references get pulled into your final assembly, and commands such as execute-assembly no longer work with assemblies cross-compiled from Linux.
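To make the "merge, then load from memory on demand" idea concrete, here is a minimal sketch of the general technique: dependencies are stored as compressed embedded resources, and an AssemblyResolve handler inflates and loads them when the runtime cannot find them on disk. This is not Costura's or dnMerge's actual loader. The class name, the resource naming scheme and the choice of raw deflate are all assumptions made for illustration.

```csharp
using System;
using System.IO;
using System.IO.Compression;
using System.Reflection;

// Illustrative sketch only: NOT the loader that Costura or dnMerge ships.
static class EmbeddedAssemblyLoader
{
    // Hypothetical resource naming scheme: "merged.<simple assembly name>.dll.deflate".
    const string Prefix = "merged.";
    const string Suffix = ".dll.deflate";

    // Call once at startup, before any type from a merged dependency is used.
    public static void Install()
    {
        AppDomain.CurrentDomain.AssemblyResolve += Resolve;
    }

    // Invoked by the runtime when normal assembly probing has failed.
    static Assembly Resolve(object sender, ResolveEventArgs args)
    {
        // args.Name is a full display name; keep only the simple name.
        string simpleName = new AssemblyName(args.Name).Name;
        string resourceName = Prefix + simpleName + Suffix;

        using (Stream packed = Assembly.GetExecutingAssembly()
                                       .GetManifestResourceStream(resourceName))
        {
            if (packed == null)
                return null; // not an embedded dependency; leave it unresolved

            // Load straight from the inflated bytes, nothing is written to disk.
            return Assembly.Load(Inflate(packed));
        }
    }

    // Decompress a raw deflate stream fully into memory.
    public static byte[] Inflate(Stream packed)
    {
        using (var inflater = new DeflateStream(packed, CompressionMode.Decompress))
        using (var raw = new MemoryStream())
        {
            inflater.CopyTo(raw);
            return raw.ToArray();
        }
    }
}
```

In a hand-rolled setup you would call EmbeddedAssemblyLoader.Install() as the first statement of Main. A build plugin's job is to do the embedding and wire up the equivalent hook for you at compile time.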

dnMerge Primer

I had a play around with MSBuild and developed a new build plugin called dnMerge. It works just like Costura, in that referenced assemblies are compressed and merged during compilation, but with the added benefit of retaining execute-assembly support when cross-compiling on Linux. Another benefit of dnMerge over Costura is its use of the LZMA compression algorithm rather than the traditional deflate algorithm used by Costura. With Costura, a simple C# program that depends on cobbr’s (and other contributors’) amazing SharpSploit library results in a merged executable a touch over 1MB. This rules out using execute-assembly inside Cobalt Strike, due to the 1MB hard limit on a single data transfer. With dnMerge, the same project produces an executable of around 800K, which is usable with Cobalt Strike’s execute-assembly.
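If you want to sanity-check a merged build against the two constraints described above (size and stray references), a small helper along these lines could do it. This is a hedged sketch, not part of dnMerge. The exact byte value of the limit (taken here as 1024 * 1024) and the list of reference names treated as .NET Core indicators are my assumptions, and it relies on Assembly.ReflectionOnlyLoadFrom, so it is meant to be run under .NET Framework rather than .NET Core.

```csharp
using System;
using System.IO;
using System.Linq;
using System.Reflection;

// Illustrative helper, not part of dnMerge.
static class MergedAssemblyCheck
{
    // Assumption: the 1MB transfer limit is interpreted as 1024 * 1024 bytes.
    const long AssumedMaxBytes = 1024 * 1024;

    // Assumption: heuristic list of reference names that hint at a
    // .NET Core / .NET Standard dependency in a legacy-framework build.
    static readonly string[] SuspectReferences =
        { "System.Runtime", "System.Private.CoreLib", "netstandard" };

    public static bool FitsTransferLimit(long sizeInBytes)
    {
        return sizeInBytes <= AssumedMaxBytes;
    }

    public static bool IsSuspectReference(string simpleName)
    {
        return SuspectReferences.Contains(simpleName, StringComparer.OrdinalIgnoreCase);
    }

    static int Main(string[] args)
    {
        if (args.Length != 1)
        {
            Console.Error.WriteLine("usage: MergedAssemblyCheck <path-to-exe>");
            return 2;
        }

        string path = Path.GetFullPath(args[0]);
        long size = new FileInfo(path).Length;
        bool ok = FitsTransferLimit(size);
        Console.WriteLine("{0} bytes, within assumed limit: {1}", size, ok);

        // Reflection-only load reads metadata without executing any code.
        Assembly target = Assembly.ReflectionOnlyLoadFrom(path);
        foreach (AssemblyName reference in target.GetReferencedAssemblies())
        {
            bool suspect = IsSuspectReference(reference.Name);
            if (suspect) ok = false;
            Console.WriteLine("{0} {1}", suspect ? "[suspect]" : "[ok]     ", reference.FullName);
        }

        // Exit code 0 only when the size fits and no suspect reference was seen.
        return ok ? 0 : 1;
    }
}
```

A non-zero exit code makes it easy to fail a build pipeline when a merged executable grows past the limit or picks up an unexpected reference.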

Using dnMerge in your project is as easy as adding the NuGet package from the central nuget.org repo. Debug builds are left alone and unmerged to allow easy debugging, but release builds will automatically compress and merge dependent assemblies ready for use with execute-assembly.

Below is a quick SDK-style project template to get you started. It can be built within Visual Studio or with the .NET Core SDK on Linux. Alternatively, add the dnMerge NuGet package to your existing project and build.

I have been using dnMerge for a couple of months now, and it is also used for merging assemblies for BOF.NET. If you do encounter any problems using it within your own project, please let me know by creating an issue on GitHub.

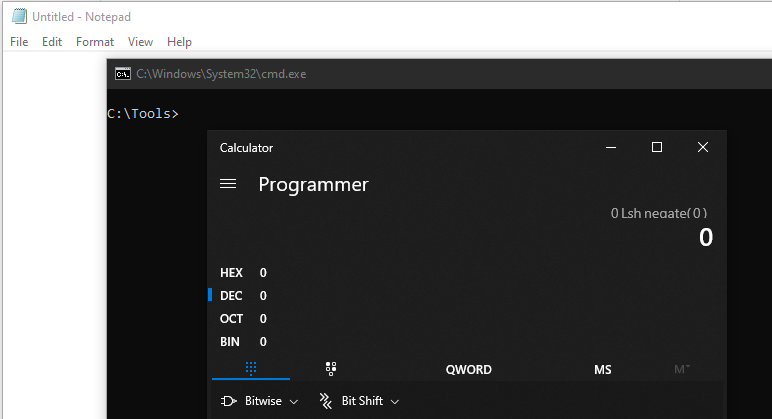

dnMergeSample.csproj

    <Project Sdk="Microsoft.NET.Sdk">

      <PropertyGroup>
        <OutputType>Exe</OutputType>
        <!--<TargetFrameworks>net40;net35</TargetFrameworks>-->
        <TargetFrameworks>net40</TargetFrameworks>
        <RepositoryUrl></RepositoryUrl>
        <PackageProjectUrl></PackageProjectUrl>
        <GeneratePackageOnBuild>false</GeneratePackageOnBuild>
        <AssemblyName>dnMergeSample</AssemblyName>
        <RootNamespace>dnMergeSample</RootNamespace>
      </PropertyGroup>

      <ItemGroup>
        <!-- Build-time only: merges dependent assemblies into the exe for Release builds -->
        <PackageReference Include="dnMerge" Version="0.5.13">
          <PrivateAssets>all</PrivateAssets>
          <IncludeAssets>runtime; build; native; contentfiles; analyzers; buildtransitive</IncludeAssets>
        </PackageReference>
        <!-- Reference assemblies for targeting legacy .NET Framework versions from Linux -->
        <PackageReference Include="Microsoft.NETFramework.ReferenceAssemblies" Version="1.0.2">
          <PrivateAssets>all</PrivateAssets>
          <IncludeAssets>runtime; build; native; contentfiles; analyzers</IncludeAssets>
        </PackageReference>
      </ItemGroup>

      <!-- The framework in these conditions must match TargetFrameworks above (net40) -->
      <PropertyGroup Condition="'$(Configuration)|$(TargetFramework)|$(Platform)'=='Release|net40|AnyCPU'">
        <DebugType>none</DebugType>
        <DebugSymbols>false</DebugSymbols>
      </PropertyGroup>

      <PropertyGroup Condition="'$(Configuration)|$(TargetFramework)|$(Platform)'=='Debug|net40|AnyCPU'">
        <DebugType>Full</DebugType>
        <DebugSymbols>true</DebugSymbols>
        <DefineConstants>DEBUG</DefineConstants>
      </PropertyGroup>

    </Project>

The full project source can be found on my GitHub repo.

Credits

dnMerge uses the brilliant dnlib library for .NET assembly modifications. Without this library, dnMerge would not be possible.

7-Zip’s LZMA SDK is used for compressing and decompressing merged assemblies.